Table of Contents

- 10+ Concurrent Validity Templates in PDF | MS Word

- 1. Concurrent Validity Template

- 2. Concurrent Validity of Questionnaire

- 3. Evaluating Concurrent Validity

- 4. Concurrent Validity of Pearson Test

- 5. Concurrent Validity of Worker

- 6. Concurrent Validity of Internet Addiction Test

- 7. Concurrent Validity Example

- 8. Concurrent Validity of University

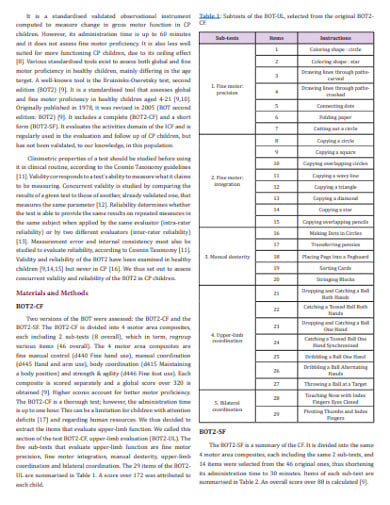

- 9. Concurrent Validity & Reliability

- 10. Standard Concurrent Validity

- 11. Concurrent Validity in DOC

- What is Concurrent Validity and Its Alignment With Classical Conceptions of Validity?

- Types of Validity

FREE 10+ Concurrent Validity Templates in PDF | MS Word

Validity is the degree to which a concept, conclusion, or measurement is well-founded and likely corresponds accurately to the real world. Concurrent validity is one kind of evidence that can be gathered to support using a test to predict other outcomes, and it is used in sociology, psychology, and other behavioral and psychometric sciences.

10+ Concurrent Validity Templates in PDF | MS Word

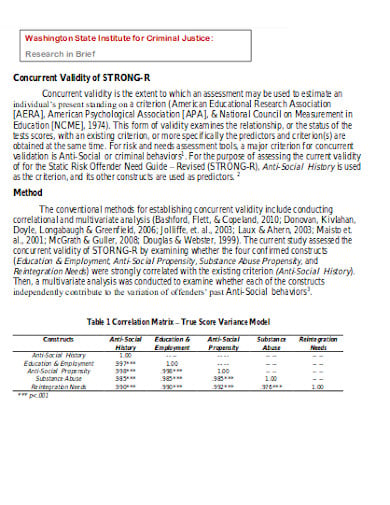

1. Concurrent Validity Template

wsu.edu

2. Concurrent Validity of Questionnaire

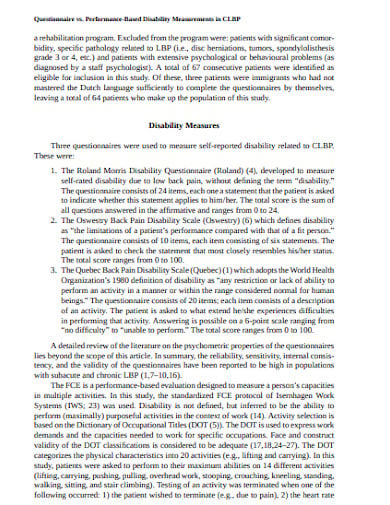

psu.edu

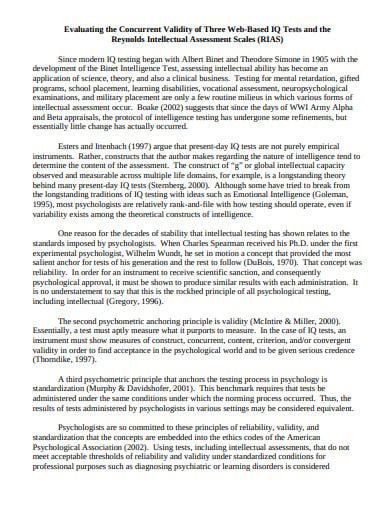

3. Evaluating Concurrent Validity

cedarville.edu

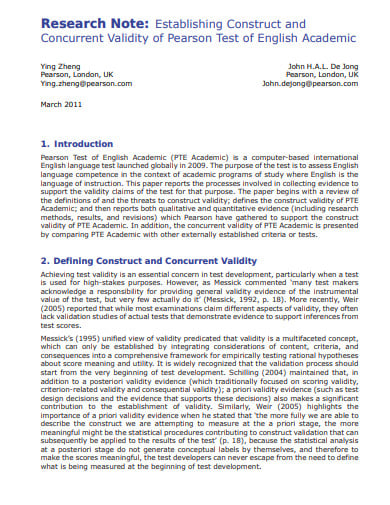

4. Concurrent Validity of Pearson Test

pearsonpte.com

5. Concurrent Validity of Worker

gatech.edu

6. Concurrent Validity of Internet Addiction Test

plos.org

7. Concurrent Validity Example

valueinhealthjournal.com

8. Concurrent Validity of University

utexas.edu

9. Concurrent Validity & Reliability

biomedres.us

10. Standard Concurrent Validity

columbusstate.edu

11. Concurrent Validity in DOC

purdue.edu

What is Concurrent Validity and Its Alignment With Classical Conceptions of Validity?

Concurrent validity is a type of evidence that can be gathered to support the use of a test for predicting other outcomes. It is used in sociology, psychology, and other behavioral and psychometric sciences. It is established by comparing a test with another measure, the criterion. The two measures may assess the same construct, but more often they assess different but presumably related constructs. Both measures are taken at the same time in the study. In predictive validity, by contrast, one measure is taken first and is meant to predict a later outcome. In both cases, the test's predictive power is typically evaluated with a simple correlation or linear regression.
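The Python below is a minimal sketch of the correlation and regression analysis described above. It computes a Pearson correlation (the validity coefficient) and an ordinary least-squares line between a new test and a criterion measure collected in the same session. The score lists and the helper names `pearson_r` and `fit_line` are hypothetical and exist only for illustration.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two score lists of equal length (n >= 2)")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def fit_line(x, y):
    """Least-squares fit of the criterion y on the test score x: y = a + b * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = sum((a - mx) * (c - my) for a, c in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return my - b * mx, b


# Hypothetical scores from eight participants, both measures taken in the same session.
new_test = [12, 15, 9, 20, 17, 11, 14, 18]
criterion = [30, 36, 25, 44, 40, 27, 33, 41]

r = pearson_r(new_test, criterion)
intercept, slope = fit_line(new_test, criterion)
print(f"validity coefficient r = {r:.3f}")
print(f"predicted criterion = {intercept:.2f} + {slope:.2f} * test score")
```

A coefficient close to 1 would support concurrent validity. With real data you would also want a confidence interval or significance test, which this sketch leaves out.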

Concurrent validity and predictive validity are the two forms of criterion validity. The only difference between them is the timing of the two measurements. Concurrent validity refers to validation studies in which the two measures are administered at approximately the same time. For example, an employment test may be given to a group of current workers. Their scores may then be compared with ratings of the same workers made by their supervisors on the same day or in the same week. The resulting correlation would be a concurrent validity coefficient. This kind of evidence could then be used to justify using the test to select future employees.

Concurrent validity can serve as a practical substitute for predictive validity. In the example above, predictive validity would be the better choice for validating an employment test, because giving the test to existing employees may not closely mirror using it to select applicants. Reduced motivation and restriction of range are two possible sources of bias in concurrent validity studies. Current employees may have little reason to try hard on a test that does not affect their jobs. They have also already been selected, so their scores cover a narrower range than applicants' scores, which tends to shrink the observed correlation.
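Restriction of range can be seen in a small simulation. The sketch below compares the correlation in a full applicant pool with the correlation among the "hired" subset. It assumes, purely for illustration, normally distributed standardized scores, a true test-performance correlation of 0.6 among all applicants, and a hiring cutoff of 0.5. The data are simulated, not real.

```python
import math
import random


def pearson_r(x, y):
    """Pearson correlation between two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


random.seed(42)
TRUE_RHO = 0.6  # assumed test-performance correlation among all applicants
N = 5000

# Simulate standardized test scores and job performance for a hypothetical applicant pool.
applicants = []
for _ in range(N):
    test = random.gauss(0, 1)
    performance = TRUE_RHO * test + math.sqrt(1 - TRUE_RHO ** 2) * random.gauss(0, 1)
    applicants.append((test, performance))

# Hypothetical hiring rule: only applicants scoring above the cutoff become employees.
CUTOFF = 0.5
employees = [(t, p) for t, p in applicants if t > CUTOFF]

r_all = pearson_r(*zip(*applicants))
r_employees = pearson_r(*zip(*employees))
print(f"applicant pool: r = {r_all:.2f} (n = {len(applicants)})")
print(f"current employees only: r = {r_employees:.2f} (n = {len(employees)})")
```

Under these assumptions, the correlation among hired employees comes out noticeably lower than in the full pool, even though the test works the same way for everyone. This is why a concurrent study run only on current staff can understate a test's usefulness for selection.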

Types of Validity

There are four main types of validity:

1: Construct Validity

Construct validity evaluates whether a measurement tool really represents the thing we want to measure. It is central to establishing the overall validity of a method. A construct is a concept or characteristic that cannot be directly observed but can be measured by observing other indicators associated with it. Constructs can be characteristics of individuals, such as intelligence, job satisfaction, or depression. They can also be broader concepts that apply to organizations or social groups, such as gender equality, freedom of speech, or corporate social responsibility. Construct validity is about ensuring that the method of measurement matches the construct you want to measure. To achieve construct validity, you must ensure that your indicators and measurements are carefully developed based on relevant existing knowledge.

2: Content Validity

Content validity assesses whether a test is representative of all aspects of the construct. To produce valid results, the content of a test, survey, or measurement method must cover all relevant parts of the subject it aims to measure. If some aspects are missing from the measurement (or if irrelevant aspects are included), the validity is threatened. Evidence of content validity includes the degree to which the test content matches a content domain associated with the construct. Content-related evidence typically involves subject matter experts (SMEs) evaluating test items against the test specifications. Before finalizing a questionnaire, the researcher should review the validity of the items against each construct or variable and revise the instrument based on the SMEs' opinions.
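Lawshe's content validity ratio is one established way to turn SME judgments into numbers, though the text above does not mention it. The formula is CVR = (n_e - N/2) / (N/2), where n_e is the number of experts who rate an item "essential" and N is the panel size. The sketch below applies it to a hypothetical 10-expert panel. The item counts and the 0.62 retention cutoff are assumptions for illustration, since published critical values depend on panel size.

```python
def content_validity_ratio(essential_votes, panel_size):
    """Lawshe's CVR = (n_e - N/2) / (N/2); ranges from -1 (no one) to +1 (everyone)."""
    if panel_size <= 0 or not 0 <= essential_votes <= panel_size:
        raise ValueError("essential_votes must lie between 0 and panel_size")
    half = panel_size / 2
    return (essential_votes - half) / half


# Hypothetical panel: 10 SMEs, and how many of them rated each item "essential".
PANEL_SIZE = 10
essential_counts = {"item_1": 10, "item_2": 8, "item_3": 5, "item_4": 3}
CUTOFF = 0.62  # assumed retention threshold for this illustration

decisions = {}
for item, votes in essential_counts.items():
    cvr = content_validity_ratio(votes, PANEL_SIZE)
    decisions[item] = "keep" if cvr >= CUTOFF else "revise or drop"
    print(f"{item}: CVR = {cvr:+.2f} -> {decisions[item]}")
```

Items that fall below the cutoff would go back to the SMEs for revision before the questionnaire is finalized.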

3: Face Validity

Face validity concerns how suitable the content of a test appears to be on the surface. It is similar to content validity, except that face validity is a more informal and subjective assessment. It is an estimate of whether a test appears to measure a certain criterion, and it does not guarantee that the test actually measures phenomena in that domain. A measure may have high validity yet poor face validity if it does not appear to measure what it actually measures. Conversely, when test takers are likely to fake their responses (malingering), low face validity may make the test more accurate. Because responses may be more honest when face validity is low, it is sometimes useful to make a measure appear to have low face validity when administering it. Because it is subjective, face validity is often considered the weakest form of validity. It may still be useful, however, in the early stages of developing a method.

4: Criterion Validity

Criterion validity evaluates how well the results of your test correspond to the results of another measure, the criterion. Evidence of criterion validity includes the correlation between the test and one or more criterion variables taken as representative of the construct. To evaluate criterion validity, you calculate the correlation between your measurement results and the criterion results. A high correlation is a good indication that your test measures what it is intended to measure. There are two types of criterion validity: concurrent validity and predictive validity. Concurrent validity evidence is obtained when the test data and the criterion data are collected at the same time. Predictive validity evidence is obtained when the test data are collected first and used to predict criterion data gathered at a later point in time.